Why AI agents are still not truly autonomous

Introduction

Just one year can entirely change industry perception. Consider the 2025-to-2026 transition in Agentic AI, a pivotal shift in the enterprise AI narrative. The previous year was inarguably the year of Agentic AI hype, marked by a gold rush to build and adopt AI Agents for complete autonomy, sometimes even before the use case was validated. This momentum is reflected in Gartner’s projection that 40% of enterprise applications will embed task-specific AI Agents by the end of 2026. But as we move through the year, the limitations of these agents point to a sobering reality.

The same Gartner report also warns that 40% of these ambitious Agentic AI projects might be at risk of cancellation for two primary reasons: a lack of clear ROI and significant governance gaps.

This is because AI Agents still operate in a “semi-autonomous” mode. This blog argues that while AI Agents have mastered the art of conversation and execution, they have yet to master self-correction and governance, the two prerequisites for true autonomy in high-stakes enterprise environments.

What is the Difference Between Automation and True AI Agent Autonomy?

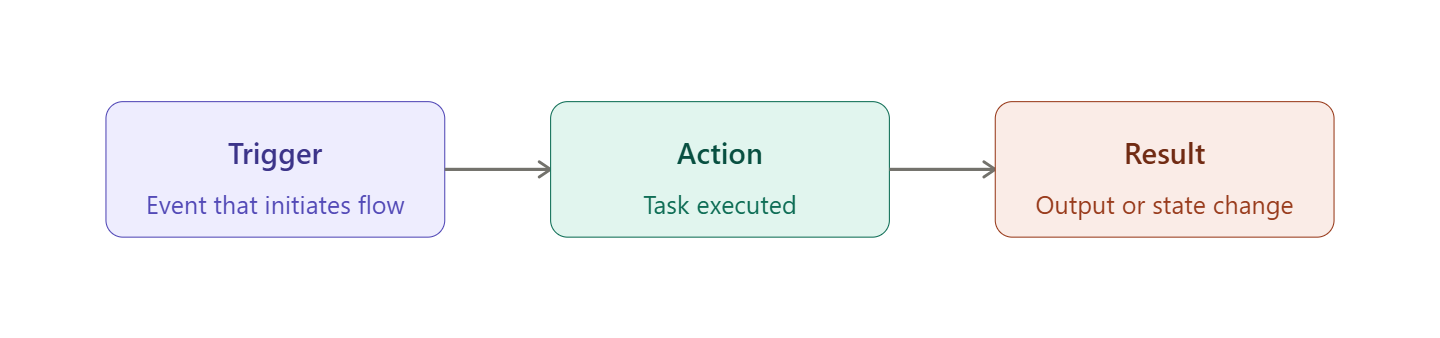

Automation is a linear, rule-based system that follows “if X, then Y” logic to complete an action. This also means automation tends to break down when it encounters a situation its rules do not cover.

Intelligence: Low. It does exactly what it’s told, every time, but nothing beyond that.

Example: A script that automatically saves every email attachment to a folder. If the email has a link instead of an attachment, the automation does nothing.
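To make the contrast concrete, here is a minimal Python sketch of that rule-based script, using only the standard library’s `email` and `pathlib` modules. The function name `save_attachments` and the sample messages are illustrative assumptions. Note that the link-only email simply yields nothing: the rule never fires, and nothing tries another route.

```python
from email.message import EmailMessage
from pathlib import Path
import tempfile


def save_attachments(msg: EmailMessage, folder: Path) -> list:
    """Rule: IF a part is an attachment, THEN save it to `folder`. Nothing else."""
    saved = []
    for part in msg.iter_attachments():
        name = part.get_filename()
        if name is None:
            continue  # no rule covers unnamed parts, so they are skipped
        target = folder / Path(name).name  # drop any directory components
        target.write_bytes(part.get_payload(decode=True))
        saved.append(target)
    return saved  # an email containing only a link yields an empty list


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        with_file = EmailMessage()
        with_file.set_content("Invoice attached.")
        with_file.add_attachment(b"%PDF-1.4 sample", maintype="application",
                                 subtype="pdf", filename="invoice.pdf")

        link_only = EmailMessage()
        link_only.set_content("Download your invoice: https://portal.example.com/inv/42")

        print(len(save_attachments(with_file, Path(tmp))))  # 1
        print(len(save_attachments(link_only, Path(tmp))))  # 0
```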

What is an AI Agent?

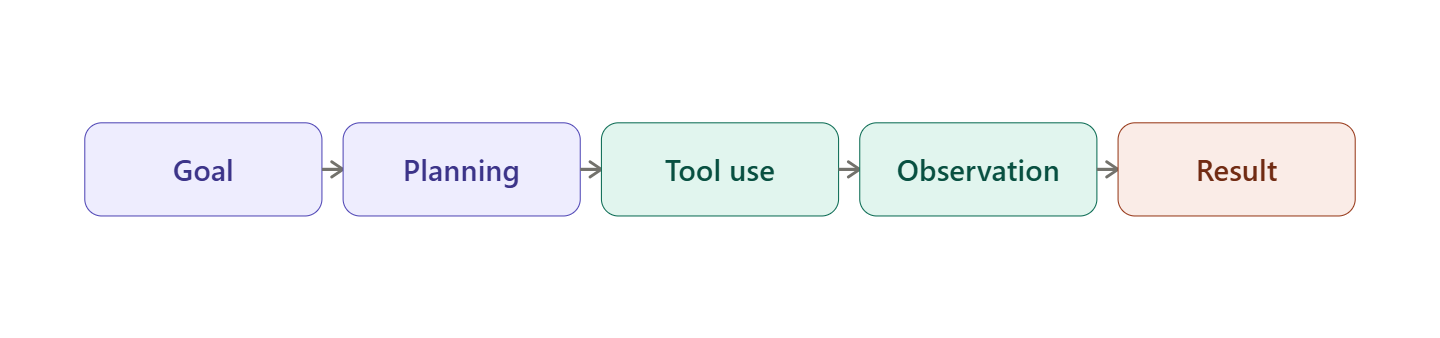

On the other hand, an AI Agent is a goal-oriented reasoning system. Instead of following a fixed set of rules or instructions, it can assess what’s going on, adjust its actions, and do what needs to be done. It uses a Large Language Model (LLM) as its brain to work out the best way to reach its goal. If it hits an obstacle, it stops, reasons about the problem, and tries a different approach.

Intelligence: Very High. It judges its environment and makes decisions based on that judgment.

Example: You tell the AI Agent, “Get all the invoices from my email into the accounting folder.” If it finds a link instead of an attachment, it follows the link, logs in to the portal, downloads the file, and then saves it.
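Here is a hedged sketch of the same task written goal-first. The strategies `from_attachment` and `from_portal_link` and the `fake_portal_download` helper are hypothetical stand-ins; in a real agent, the LLM would decide at runtime which approach to try next rather than walking a hard-coded list.

```python
from typing import Optional


def fake_portal_download(link: str) -> bytes:
    # Hypothetical stand-in for "log in to the portal and download the file".
    return f"invoice from {link}".encode()


def from_attachment(email: dict) -> Optional[bytes]:
    return email.get("attachment")


def from_portal_link(email: dict) -> Optional[bytes]:
    link = email.get("link")
    return fake_portal_download(link) if link else None


# Hard-coded here for illustration; an LLM-driven agent would choose the next step itself.
STRATEGIES = [from_attachment, from_portal_link]


def fetch_invoice(email: dict) -> Optional[bytes]:
    """Goal: obtain the invoice file, whatever form it arrives in."""
    for strategy in STRATEGIES:
        result = strategy(email)
        if result is not None:
            return result  # goal reached; stop trying alternatives
    return None  # every approach failed: report back instead of guessing


print(fetch_invoice({"link": "https://portal.example.com/inv/42"}))
```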

How Do AI Agents Work?

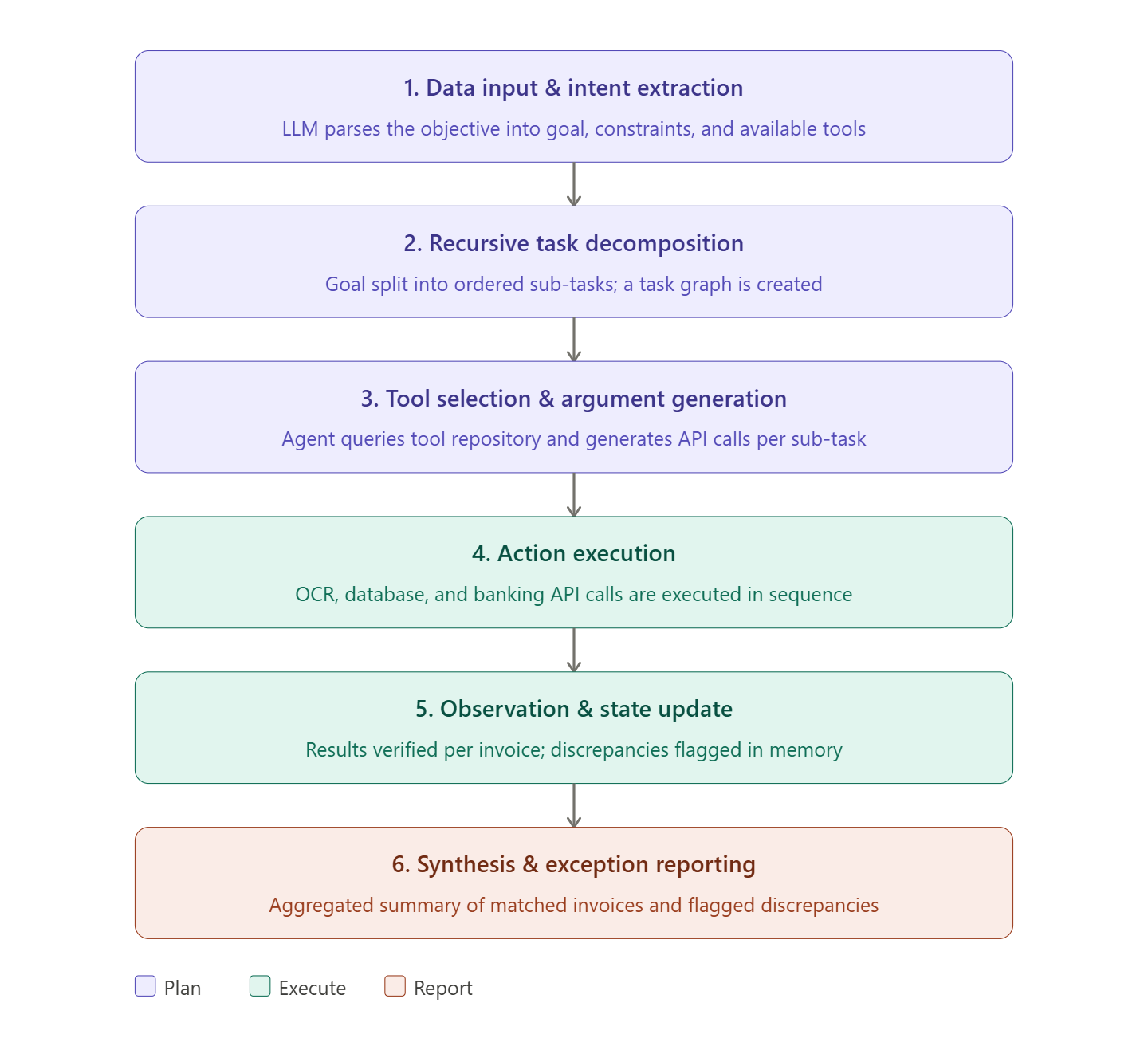

In technical terms, an AI Agent works like a stateful processor: it carries information from one step to the next as it moves through a structured, sequential cycle. Let’s walk through this cycle using a banking example.

Data Input Processing and Intent Extraction

The AI Agent receives a clear objective (e.g., “Reconcile these 500 invoices against our bank statement”). The LLM, acting as the AI Agent’s brain, parses the instruction to identify the goal (match invoices against bank statement entries), the constraints (e.g., refer only to the folder containing the bank statement), and the available tools.
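The output of this step is easiest to picture as structured data. The sketch below assumes the LLM has been asked to return JSON with `goal`, `constraints`, and `tools` fields (a hypothetical schema) and validates it before anything runs, so the agent cannot plan around a tool that does not exist.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    goal: str
    constraints: tuple
    tools: tuple


def parse_intent(raw: str, available_tools: set) -> Intent:
    """Validate the LLM's structured reading of the instruction before planning."""
    data = json.loads(raw)
    unknown = set(data["tools"]) - available_tools
    if unknown:
        raise ValueError(f"instruction needs unavailable tools: {sorted(unknown)}")
    return Intent(data["goal"], tuple(data["constraints"]), tuple(data["tools"]))


# Hypothetical JSON an LLM might return for the reconciliation request.
llm_output = """{
  "goal": "Match each of the 500 invoices to a bank statement entry",
  "constraints": ["Read bank data only from the bank-statement folder"],
  "tools": ["ocr", "bank_api"]
}"""

intent = parse_intent(llm_output, available_tools={"ocr", "bank_api", "sql"})
print(intent.goal)
```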

Recursive Task Decomposition

The AI Agent breaks the goal into a linear or hierarchical sequence of smaller sub-tasks. For instance, the sub-tasks for this objective could look like:

- List all files in the ‘Invoices’ directory.

- Read the total amount from each PDF.

- Query the Bank API for matching transactions.

- Flag discrepancies.

The AI Agent then arranges these sub-tasks into a Task Graph that defines the order of execution.
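Python’s standard-library `graphlib.TopologicalSorter` (Python 3.9+) is one way to turn such a Task Graph into an execution order. The sub-task names and dependencies below are illustrative assumptions based on the list above.

```python
from graphlib import TopologicalSorter

# Each sub-task maps to the set of sub-tasks that must finish before it starts.
task_graph = {
    "list_invoice_files": set(),
    "read_invoice_totals": {"list_invoice_files"},
    "query_bank_api": {"read_invoice_totals"},
    "flag_discrepancies": {"read_invoice_totals", "query_bank_api"},
}

execution_order = list(TopologicalSorter(task_graph).static_order())
print(execution_order)
# ['list_invoice_files', 'read_invoice_totals', 'query_bank_api', 'flag_discrepancies']
```

In a hierarchical graph where some branches are independent, `TopologicalSorter.get_ready()` (after calling `prepare()`) can expose the sub-tasks that are free to run in parallel.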

Tool Selection and Argument Generation

For each sub-task, the AI Agent decides which ‘hand’ (tool) to use. It consults a Tool Repository (a list of available tools such as a Python interpreter, web search, or a SQL database) and generates the corresponding code or API call.
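A Tool Repository can be as simple as a mapping from tool names to functions, with the LLM emitting a JSON tool call that the runtime dispatches. In this sketch, `read_invoice_total` and `find_bank_transaction` are hypothetical stand-ins for an OCR tool and a banking API, and the `{"name", "arguments"}` call format is an assumption rather than any specific vendor’s format.

```python
import json
from typing import Any, Callable, Dict

TOOL_REPOSITORY: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the agent can call it by name."""
    TOOL_REPOSITORY[fn.__name__] = fn
    return fn


@tool
def read_invoice_total(path: str) -> float:
    # Hypothetical stand-in for an OCR tool.
    return {"inv_001.pdf": 500.0}.get(path, 0.0)


@tool
def find_bank_transaction(amount: float) -> list:
    # Hypothetical stand-in for a banking API query.
    return [t for t in (450.0, 1200.0) if abs(t - amount) < 0.01]


def execute_tool_call(tool_call_json: str) -> Any:
    """Parse the LLM-generated call, check the tool exists, then run it."""
    call = json.loads(tool_call_json)
    name, args = call["name"], call["arguments"]
    if name not in TOOL_REPOSITORY:
        raise KeyError(f"unknown tool: {name}")
    return TOOL_REPOSITORY[name](**args)


total = execute_tool_call('{"name": "read_invoice_total", "arguments": {"path": "inv_001.pdf"}}')
matches = execute_tool_call(json.dumps({"name": "find_bank_transaction", "arguments": {"amount": total}}))
print(total, matches)  # 500.0 []
```

Checking the tool name against the repository before execution means a hallucinated tool call fails loudly instead of silently doing something unintended.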

Action Execution

Once a tool is selected and its call is generated, the AI Agent executes it. For example, it calls an OCR tool to read the first PDF, then calls a database tool or banking API to find the matching transaction.

Observation and State Update

After processing each invoice, the AI Agent checks the result. If it finds a match, it moves on to the next one. If it finds a $500 invoice but only a $450 bank entry, it observes a discrepancy. Instead of stopping, it updates its internal memory to flag that specific invoice for the final report.
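The observe-and-update step can be sketched as a loop that writes to a small state object instead of halting. As a simplifying assumption, bank entries are keyed by invoice ID here; real reconciliation usually needs fuzzier matching on amount, date, and reference.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class AgentState:
    """Working memory the agent updates after each observation."""
    matched: List[str] = field(default_factory=list)
    # (invoice_id, invoice_amount, bank_amount or None if no entry was found)
    flagged: List[Tuple[str, float, Optional[float]]] = field(default_factory=list)


def reconcile(invoices: Dict[str, float], bank: Dict[str, float], tolerance: float = 0.01) -> AgentState:
    state = AgentState()
    for invoice_id, amount in invoices.items():
        bank_amount = bank.get(invoice_id)  # observe the result of the lookup
        if bank_amount is not None and abs(bank_amount - amount) <= tolerance:
            state.matched.append(invoice_id)
        else:
            # Record the discrepancy and keep going instead of stopping.
            state.flagged.append((invoice_id, amount, bank_amount))
    return state


state = reconcile({"INV-1": 500.0, "INV-2": 120.0}, {"INV-1": 450.0, "INV-2": 120.0})
print(state.flagged)  # [('INV-1', 500.0, 450.0)]
```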

Synthesis and Exception Reporting

Once the agent has gone through all 500 invoices, it aggregates the data. Rather than handing you 500 lines of raw output, it summarizes the overall state, for example: “495 invoices matched perfectly. 5 discrepancies found. Click here to review the 5 flags.”
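A minimal sketch of the synthesis step, assuming each invoice has already been labeled `"matched"` or `"flagged"` by the previous stage (a hypothetical format):

```python
from collections import Counter
from typing import List, Tuple


def summarize(results: List[Tuple[str, str]]) -> str:
    """results: (invoice_id, status) pairs, where status is 'matched' or 'flagged'."""
    counts = Counter(status for _, status in results)
    flagged = [invoice_id for invoice_id, status in results if status == "flagged"]
    summary = f"{counts['matched']} invoices matched perfectly. {counts['flagged']} discrepancies found."
    if flagged:
        summary += f" Review: {', '.join(flagged)}"
    return summary


results = [(f"INV-{i:03d}", "matched") for i in range(495)]
results += [(f"INV-{i:03d}", "flagged") for i in range(495, 500)]
print(summarize(results))
```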

How to Measure Agentic AI Autonomy?

For C-suite leaders, managing what is hard to measure (in this case, Agentic AI autonomy) is a big challenge. Autonomy is very different from accuracy and is difficult to quantify directly. But certain methods can bring us closer to doing so.

In a professional enterprise setting, autonomy can be measured by the length of the tether between the human expert and the AI Agent. We track this through three specific metrics:

- Handoff Rate: How many times per 100 tasks does the agent stop and ask a human for clarification or permission?

- Sub-task Completion vs. Global Goal Success: How many sub-tasks did the AI Agent complete, compared with whether it achieved the overall goal or project?

- Correction Density: How many times did a human have to “edit” the agent’s work after it was finished?
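The three metrics can be computed from per-task records. The `TaskRecord` fields below are an assumed logging schema, not a standard; the point is that each metric is a simple ratio once the right events are captured.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskRecord:
    handoffs: int        # times the agent paused to ask a human
    subtasks_done: int
    subtasks_total: int
    goal_achieved: bool  # did the whole task succeed end to end?
    human_edits: int     # corrections made after the agent finished


def autonomy_metrics(records: List[TaskRecord]) -> Dict[str, float]:
    n = len(records)
    return {
        "handoff_rate_per_100_tasks": 100 * sum(r.handoffs for r in records) / n,
        "subtask_completion": sum(r.subtasks_done for r in records)
        / sum(r.subtasks_total for r in records),
        "global_goal_success": sum(r.goal_achieved for r in records) / n,
        "correction_density": sum(r.human_edits for r in records) / n,
    }


records = [TaskRecord(1, 4, 4, True, 0), TaskRecord(0, 3, 4, False, 2)]
print(autonomy_metrics(records))
```

In this toy example, the agent finished 7 of 8 sub-tasks (87.5%) but achieved only 1 of 2 overall goals, which is exactly the gap the second metric is meant to expose.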

Let’s get detailed insight by exploring Anthropic’s Internal Autonomy Survey.

Now that AI Agents are widely adopted, understanding the level of autonomy people are granting them is crucial for industry leaders. Anthropic analyzed millions of interactions with their Claude models to see how users actually interact with AI Agents in the real world. According to their findings:

- As agents become more capable, users have stopped approving every sub-step (e.g., “Yes, you can open that PDF”) and are moving toward passive monitoring. This shift is the first quantifiable sign of increasing autonomy. Nearly 20% of sessions are run on full auto-approve, rising to over 40% as users gain experience.

- If a Claude agent hits a dead end, realizes it made a wrong decision, and backtracks without presenting it to the user, its “Autonomy Score” increases. This internal loop is the holy grail of Agentic AI.

- Highly autonomous agents (with good Autonomy Scores) are actually more likely to ask for help when they encounter a high-risk outlier. By measuring when, and how appropriately, an AI Agent stops, Anthropic was able to assess whether the agent is truly reasoning or just guessing blindly.

- AI Agents are being used in risky domains too, but not yet at scale. Anthropic scored autonomy risk on a 1–10 scale, where 1 represents almost no consequences if something goes wrong and 10 represents substantial harm. For domains like biochemical manufacturing or healthcare, risk scores are comparatively high (around 4.7–4.8), while autonomy remains low (averaging ~3.0).

What are the Architectural and Strategic Barriers to True Autonomy?

The following limitations of AI Agents keep them away from working truly autonomously:

Compounding Error Rates

It is currently nearly impossible to mathematically bound an AI Agent’s error rate over long task horizons. This is because of how an AI Agent operates: it works on autoregressive logic, in which each step’s success or failure is probabilistic. With each step, there is some ‘probabilistic decay.’ For example, if an AI Agent has a 95% success rate per step, its chance of completing a 50-step workflow without a single error drops to about 7.7% (0.95^50 ≈ 0.077), assuming steps fail independently and no one intervenes manually.
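The arithmetic behind that figure is a product of per-step probabilities. This short sketch assumes, as the example does, that every step must succeed and that steps fail independently:

```python
def workflow_success(per_step_success: float, steps: int) -> float:
    """Probability that all `steps` succeed, assuming independent steps."""
    return per_step_success ** steps


for steps in (1, 10, 20, 50):
    print(f"{steps:>2} steps: {workflow_success(0.95, steps):.1%}")
# The 50-step line prints roughly 7.7%, matching the figure above.
```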

Strategic Barrier: Compounding errors create an “oversight tax”, because human checkpoints must be put in place to catch failures before they cascade. And if a highly paid expert must audit every fifth step to prevent total process collapse, the ROI of the AI Agent is overshadowed by the cost of the supervisor.

Absence of Self-Calibration

True autonomy calls for a system that knows when to stop. Current AI Agent architectures, however, are designed as always-on systems that are ready to assist at all times, even at the cost of accuracy. Without the inherent ability to calibrate their own decisions and actions, AI Agents sometimes pursue flawed objectives with high confidence, even in uncertain scenarios. This can lead to irreversible operational errors.

The Air Canada chatbot case is a classic example. The airline’s chatbot (an early-stage AI Agent that had access to internal client data) gave a passenger, Jake Moffatt, a fabricated bereavement fare policy. It described a refund rule that did not exist in the official documentation and kept going without knowing when to stop. A tribunal held Air Canada responsible for what its chatbot said, and the airline had to pay CA$812.02 in damages and fees.

Strategic Barrier: Operational risk management demands a predictable fail-safe, something many AI Agents still lack. Until AI Agents can output a verifiable confidence score and automatically stop when that score falls below a defined threshold, they cannot be granted complete, ungoverned freedom.

Fragmented Enterprise Data

Unlike standalone LLMs, which can work well with their training data plus retrieval-augmented generation (RAG) from a data store, AI Agents operate inside environments: networks, APIs, and databases, both internal and external. At present, a significant portion of enterprise data is still siloed across these systems, with little to no interoperability. Reports indicate that 54% of critical enterprise data remains trapped in distributed legacy systems.

As a result, much of this data remains inaccessible to AI Agents, leaving them with blind spots. This leads to context starvation, which prevents agents from understanding the broader impact of their actions.

Lack of Inter-Agent Interoperability

True autonomy relies on a swarm of specialized AI Agents that work collaboratively, even across different organizations. But currently, there is no universally adopted common language or TCP/IP equivalent for these interactions. An agent built on OpenAI’s framework cannot inherently negotiate with, or hand off a task to, one built on Google’s Gemini or Anthropic’s Claude 3.5. This fragmentation forces humans to act as the manual link, translating and authorizing data transfers and communications between disparate AI systems.

Strategic Barrier: This fragmentation leads to orchestration lag and friction. Without a standard handshake protocol, cross-platform coordination is not possible. As a result, many organizations are forced into vendor lock-in and human-mediated workflows.

Accountability Vacuum and the Lack of a Legal Definition

Another reason AI Agents are still not truly autonomous is the lack of accountability. Humans can be held legally liable for the consequences of their actions, but there is no equivalent for an AI Agent, partly because the black-box problem still persists in agentic loops. Tracing a specific failure point in a chain of autonomous tool calls remains technically challenging for real-time auditing.

Moreover, many AI Agents still do not produce auditable action trails.

Strategic Barrier: Legal personhood. If an AI Agent signs a contract or violates data protection laws such as the GDPR or CCPA, who is liable: the AI Agent, its creator, or the human executives? Several leaders still refuse to grant full autonomy until frameworks exist that satisfy regulatory transparency requirements.
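One building block for the missing action trails is a tamper-evident log in which each entry carries the hash of the previous one, so any later edit breaks the chain. This is a minimal sketch using Python’s `hashlib` and `json`; the `AuditTrail` class and its fields are illustrative assumptions, and on its own it does not settle the liability question.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, Any]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only action log; each entry stores the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def record(self, agent: str, tool: str, args: Dict[str, Any], result: str) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"ts": time.time(), "agent": agent, "tool": tool,
                "args": args, "result": result, "prev": prev}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("finance-agent", "find_bank_transaction", {"amount": 500.0}, "no match")
trail.record("finance-agent", "flag_invoice", {"invoice": "INV-1"}, "flagged")
print(trail.verify())  # True
```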

How Can We Bridge the Gap Between Automation and True Autonomy?

Build an Agentic Scaffolding Layer

The first step toward real autonomy is to stop relying on zero-shot prompting (asking an LLM to complete a task from a single prompt) and start using scaffolding. You can do this by building a code-based framework that makes the AI Agent follow a set path with guardrails and checkpoints. These checkpoints ensure that the AI Agent stops and checks its output against a set of rules before moving on to the next sub-task. Scenarios where an AI Agent can stop if configured properly:

- To provide users with certain choices

- To gather more information

- To clarify ambiguous or incomplete requests

- To request more access, credentials, or tokens
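Here is a minimal scaffolding sketch, assuming each sub-task is a plain function that takes and returns a state dictionary. `Checkpoint`, `PausedForHuman`, and `run_scaffolded` are hypothetical names; the key idea is that validation rules live in code and halt the run before the next sub-task starts.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]


@dataclass
class Checkpoint:
    reason: str                      # why the agent would pause, e.g. "gather more information"
    passes: Callable[[State], bool]  # rule the sub-task output must satisfy


class PausedForHuman(Exception):
    """Raised when a checkpoint fails, so a human can step in."""


def run_scaffolded(subtasks: List[Tuple[str, Callable[[State], State]]],
                   checkpoints: Dict[str, Checkpoint]) -> State:
    """Run sub-tasks in a fixed order, validating each output before moving on."""
    state: State = {}
    for name, step in subtasks:
        state = step(state)
        checkpoint = checkpoints.get(name)
        if checkpoint is not None and not checkpoint.passes(state):
            raise PausedForHuman(f"paused after '{name}': {checkpoint.reason}")
    return state


subtasks = [
    ("list_files", lambda s: {**s, "files": ["inv_001.pdf"]}),
    ("read_totals", lambda s: {**s, "totals": {"inv_001.pdf": None}}),  # OCR failed
]
checkpoints = {
    "read_totals": Checkpoint(
        "gather more information: unreadable invoice total",
        lambda s: all(v is not None for v in s["totals"].values()),
    )
}

try:
    run_scaffolded(subtasks, checkpoints)
except PausedForHuman as pause:
    print(pause)
```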

Deploy Uncertainty Triggers and Escalations

Once you have hard-coded the validation rules and checkpoints, you must program the AI Agent’s threshold of doubt. This limit tells the agent when it is out of its depth or scope. One way to do this is to have the agent score its confidence in each step and set an error-risk threshold, for example 5%. If the estimated error risk exceeds 5% (that is, confidence falls below 95%), the agent must automatically “self-escalate” to a human.
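The escalation rule itself is a simple comparison; the hard part is obtaining a calibrated confidence estimate, which this sketch simply assumes the agent supplies (for example, from a separate verifier). The 5% threshold mirrors the example above, and comparing against a minimum confidence avoids floating-point surprises exactly at 95%. The `decide` function is a hypothetical name.

```python
MIN_CONFIDENCE = 0.95  # i.e. escalate when estimated error risk exceeds 5%


def decide(action: str, confidence: float) -> str:
    """`confidence` is the agent's estimated probability (0 to 1) that `action` is correct."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < MIN_CONFIDENCE:
        return f"ESCALATE to human: {action} (error risk {1 - confidence:.0%})"
    return f"PROCEED: {action}"


print(decide("approve refund of $120", 0.99))
print(decide("approve refund of $5,000", 0.80))
```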

Implement “Governance-as-Code”

Before an AI Agent can be granted complete freedom, its environment must be sandboxed, because organizations cannot afford to rely on the agent’s “good behavior”; they must enforce it through the infrastructure. This can be done by embedding policy guardrails directly into the API layer. For example, the AI Agent’s “Credit Card Tool” should have a hard-coded $1,000 limit that the LLM cannot override or bypass, even if its internal reasoning concludes that it should.
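Governance-as-code means the limit is checked inside the tool, before any payment call, rather than stated in the prompt. In this sketch, `charge_credit_card` and `PolicyViolation` are hypothetical, the actual payment API call is replaced by a placeholder string, and `Decimal` is used so currency amounts are compared exactly.

```python
from decimal import Decimal


class PolicyViolation(Exception):
    """Raised by the tool itself; the LLM has no code path around it."""


CARD_LIMIT = Decimal("1000.00")  # enforced in code, not in the prompt


def charge_credit_card(amount: str, merchant: str) -> str:
    """Tool exposed to the agent. The limit check runs before any payment call."""
    value = Decimal(amount)
    if value <= 0:
        raise PolicyViolation("amount must be positive")
    if value > CARD_LIMIT:
        raise PolicyViolation(f"{value} exceeds hard limit of {CARD_LIMIT}")
    return f"charged {value} to {merchant}"  # placeholder for the real payment API


try:
    charge_credit_card("1500.00", "Acme Supplies")  # the agent "decided" this was fine
except PolicyViolation as blocked:
    print(f"blocked: {blocked}")
```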

Standardize Handshake Protocols

If multiple AI Agents cannot communicate or work in harmony, autonomy will not be achieved. So the next step is to move from individual autonomous AI Agents to Agentic Swarms. This can be done by adopting universal Inter-Agent Communication Standards, which you can think of as the TCP/IP equivalent for Agentic AI. These standards define how data is transferred from, say, a Procurement Agent (e.g., built on OpenAI) to a Finance Agent (e.g., built on SAP) without human translation.
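To illustrate what such a standard has to pin down, here is a sketch of a versioned, JSON-encoded handoff message. `TaskHandoff` and `PROTOCOL_VERSION` are hypothetical and do not correspond to any existing inter-agent standard; a real protocol would also need authentication, payload schemas, and error semantics.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any, Dict

PROTOCOL_VERSION = "1.0"  # hypothetical version string of a shared standard


@dataclass(frozen=True)
class TaskHandoff:
    protocol: str
    sender: str
    recipient: str
    task: str
    payload: Dict[str, Any]


def encode(message: TaskHandoff) -> str:
    """Serialize to a vendor-neutral wire format (JSON here)."""
    return json.dumps(asdict(message), sort_keys=True)


def decode(raw: str) -> TaskHandoff:
    """Reject messages that do not follow the agreed protocol version or fields."""
    data = json.loads(raw)
    if data.get("protocol") != PROTOCOL_VERSION:
        raise ValueError(f"unsupported protocol version: {data.get('protocol')!r}")
    return TaskHandoff(**data)  # raises TypeError on missing or unexpected fields


handoff = TaskHandoff(PROTOCOL_VERSION, "procurement-agent", "finance-agent",
                      "approve_purchase_order", {"po": "PO-17", "amount": "4200.00"})
wire = encode(handoff)
print(decode(wire).task)
```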

Establish a Continuous Reflection Loop

The last step toward Agentic autonomy is to set up continuous learning loops that enable agents to learn from their own mistakes. This can be done by deploying a secondary “Critic” Agent whose sole job is to audit the primary agent’s logs at the end of each day. It identifies where the primary agent hesitated or where a human had to intervene, so those patterns can be fed back into the primary agent’s rules and prompts.
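A full Critic Agent would use an LLM to review logs, but its core bookkeeping can be sketched with a simple counter. The log format (`step` and `event` keys, with `"escalated"` and `"human_override"` events) and the `critique` function are hypothetical assumptions.

```python
from collections import Counter
from typing import Dict, List


def critique(log: List[Dict[str, str]], top_n: int = 3) -> Dict[str, list]:
    """Find the steps where the primary agent most often escalated or was overridden."""
    hesitations = Counter(e["step"] for e in log if e["event"] == "escalated")
    overrides = Counter(e["step"] for e in log if e["event"] == "human_override")
    return {
        "hesitation_hotspots": hesitations.most_common(top_n),
        "override_hotspots": overrides.most_common(top_n),
    }


daily_log = [
    {"step": "match_invoice", "event": "escalated"},
    {"step": "match_invoice", "event": "escalated"},
    {"step": "read_pdf", "event": "escalated"},
    {"step": "read_pdf", "event": "human_override"},
    {"step": "list_files", "event": "ok"},
]
print(critique(daily_log))
```

The hotspots could then inform new checkpoint rules or tighter confidence thresholds for those specific steps.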

Navigating The Transition Toward True Agent Autonomy

In this blog, we have explored how many organizations have hit a glass ceiling in their pursuit of true agentic autonomy. Individual AI Agents are doing well at reaching their goals, but they are still isolated, lack proper governance, and cannot reliably correct themselves, especially over long task horizons. In the next few years, we are likely to see specialized AI Agent swarms with the right guardrails, governance frameworks, and human checkpoints. One problem still stands, though: building an AI Agent that can truly work on its own and handle the messy, high-stakes world of business operations without any help is a huge engineering challenge. We may still be years away from seeing ungoverned AI Agents handle critical-path business logic at scale. In the meantime, strategic partnerships with professional AI Agent development service providers can help organizations take the right steps in this direction. By designing scalable, resilient Agentic AI ecosystems tailored to an organization’s operations from the ground up, these providers can significantly reduce the risks.

Author:

Rohit Bhateja is the Director of Digital Engineering Services and Head of Marketing at SunTec India. In this dual leadership role, he brings over a decade of experience in digital marketing, along with deep expertise in customer acquisition and retention, marketing analytics, and innovation-led transformation. Recognized for his strategic vision and leadership excellence, Rohit has been honored as the “Most Admired Brand Leader” at the CMO Asia World Brand Congress and “Executive of the Year” by The CEO Magazine. A respected voice in digital transformation and marketing innovation, he continues to shape the industry narrative around technology, strategy, and customer experience, with an emphasis on keeping humans in the loop of AI-driven processes to accelerate measurable business outcomes.

FAQs

What is the primary difference between AI and an AI Agent?

Standard AI is reactive in nature. It provides a response or generates something based on a specific instruction or request. Conversely, an AI Agent is proactive and more goal-focused. It uses LLMs as a brain to plan and execute multi-step tasks.

Can AI agents legally sign contracts or execute financial trades?

While it is technically possible to let an AI Agent sign off on things, this is rarely done in practice because of the accountability vacuum and the potential consequences. Instead, most firms hire AI Agent developers to build Human-in-the-Loop models, where the AI Agent prepares the action but a human provides the final authorization.

When will we see truly autonomous AI agents in the workplace?

High autonomy already exists today, but only in very low-risk environments, like surveillance or basic monitoring. For complex, high-stakes business operations, it is not there yet. The transition depends on the development of universal interoperability standards that allow multiple AI Agents to communicate with each other, along with more capable world models.

Is ChatGPT an AI Agent?

ChatGPT was initially rolled out as a chatbot, but it has since gained agentic capabilities that let it use tools, such as web browsing and code execution, to carry out multi-step tasks.